AI Scraping Concerns Put the Web Archive at Risk

Major news publishers have started blocking the Wayback Machine over fears of AI training data collection. The Internet Archive argues this will erase public historical records, not stop AI.

TL;DR

Major news publishers have begun blocking the Internet Archive's crawlers, which feed the Wayback Machine, citing concerns over unauthorized AI training data collection. The Internet Archive contends that it is a public digital library, not an AI scraping tool, and warns that these blocks will result in the loss of historical records.

This post summarizes and reviews two articles, one from Nieman Lab and one from Techdirt. You can read the full articles via the links below.

- Nieman Lab, "News publishers limit Internet Archive access due to AI scraping concerns"

- Techdirt, "Preserving The Web Is Not The Problem. Losing It Is."

Publishers Limiting Access (Nieman Lab)

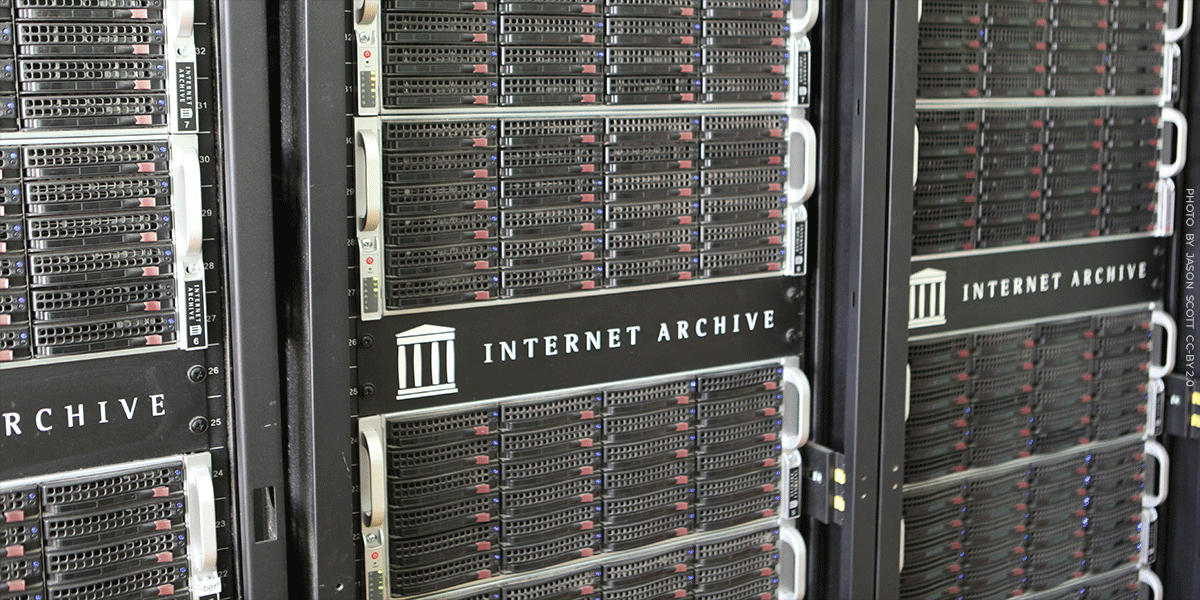

As AI bots scavenge the web for training data, the Internet Archive's commitment to free information access has turned its digital library into a potential liability for some news publishers.

The Guardian found through its access logs that the Internet Archive was a frequent crawler of its content. It subsequently took steps to exclude itself from the Internet Archive's APIs and filter out its article pages from the Wayback Machine's URL interface. Robert Hahn, head of business affairs and licensing, stated: "A lot of these AI businesses are looking for readily available, structured databases of content. The Internet Archive's API would have been an obvious place to plug their own machines into and suck out the IP." That said, The Guardian stopped short of a full block, citing support for the nonprofit's mission to democratize information.

The New York Times confirmed it is actively "hard blocking" the Internet Archive's crawlers. By the end of 2025, it had added archive.org_bot to its robots.txt file. A Times spokesperson said: "We are blocking the Internet Archive's bot from accessing the Times because the Wayback Machine provides unfettered access to Times content — including by AI companies — without authorization." The Financial Times and Reddit took similar measures; Reddit stated it had confirmed instances where AI companies violated platform policies by scraping data from the Wayback Machine.

Nieman Lab analyzed the robots.txt files of 1,167 news sites (exploratory data; 76% of sites are U.S.-based) and found that 241 sites across nine countries explicitly disallow at least one of the Internet Archive's four crawling bots. 87% of those sites are owned by USA Today Co. (formerly Gannett), which implemented the blocks across its properties in 2025. Of the 241 sites, 240 also disallow Common Crawl — another nonprofit web preservation project — suggesting the blocking trend extends beyond the Internet Archive alone.
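To get a feel for what such a robots.txt survey involves, here is a minimal sketch using Python's standard `urllib.robotparser`. It is not Nieman Lab's actual method. The site names and sample files are made up for illustration, and only `archive.org_bot` is checked because it is the one crawler name mentioned in this post. Note that this check also flags sites that shut out every crawler with a wildcard rule, so it is looser than counting only "explicit" disallows.

```python
from urllib.robotparser import RobotFileParser

# Only archive.org_bot is named in this post; the Internet Archive's other
# crawler names are not listed here, so they are left out rather than guessed.
BOTS = ("archive.org_bot",)


def blocks_any(robots_txt: str, bots: tuple[str, ...] = BOTS) -> bool:
    """Return True if the site root is off limits to at least one listed bot."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return any(not parser.can_fetch(bot, "/") for bot in bots)


def blocking_sites(robots_by_site: dict[str, str]) -> list[str]:
    """Return the sites whose robots.txt blocks at least one listed bot."""
    return sorted(site for site, text in robots_by_site.items() if blocks_any(text))


# Hypothetical sample data: one site that blocks the bot, one that does not.
samples = {
    "blocker.example": "User-agent: archive.org_bot\nDisallow: /\n",
    "open.example": "User-agent: *\nDisallow: /private/\n",
}

print(blocking_sites(samples))
```

A real survey would first have to download each site's robots.txt and deal with missing or malformed files, which this sketch skips.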

Internet Archive founder Brewster Kahle warned that "if publishers limit libraries, like the Internet Archive, then the public will have less access to the historical record." Robert Hahn acknowledged the situation as "the law of unintended consequences: You do something for really good purposes, and it gets abused."

The Internet Archive's Pushback (Techdirt)

In response, Wayback Machine Director Mark Graham wrote in Techdirt that publishers' concerns were "understandable, but unfounded." He emphasized that the Internet Archive, which operates the Wayback Machine, is a 501(c)(3) nonprofit public library and a federally designated library of record that has been operating since 1996, not a tool designed for large-scale commercial scraping. He noted that the Internet Archive actively prevents malicious access through rate limiting, filtering, and monitoring, and responds promptly when new scraping patterns are detected.

Graham identified the consequences of blocking as the more serious problem. If the archive is cut off, the public loses access to historical records, journalists lose a tool for fact-checking past content, and researchers lose primary digital sources. Techdirt's tech policy writer Mike Masnick warned that if quality journalism is removed from the archive, "the historical record will be skewed against quality journalism."

The Internet Archive added that it is currently working with publishers to find technical solutions that address their concerns without erasing the historical record.

Comment

Both sides have their own logic. That said, for publishers, the motivation may involve not just AI scraping concerns but also the very real interest in maintaining their paywalls.

The effectiveness of these measures is also questionable. AI companies operating large IP pools cannot realistically be stopped by robots.txt alone: the file carries no legal enforcement power, and there are various workarounds such as ignoring it outright or spoofing the User-Agent header. The ones most likely to be harmed by these blocks are not AI companies, but everyday users and researchers who rely on the archive for study and documentation purposes. In fact, it was only after coming across this issue that I understood why the Wayback Machine wasn't properly capturing Reddit pages with comments intact.
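The reason robots.txt is so easy to sidestep is that the check runs on the crawler's side, keyed on whatever name the crawler chooses to announce. The sketch below illustrates that point under simple assumptions: `polite_fetch`, `fake_fetch`, and `HypotheticalBot` are names I made up, and the robots.txt rule mirrors the `archive.org_bot` block described above. No real network request is made.

```python
from typing import Callable, Optional
from urllib.robotparser import RobotFileParser

# A rule like the one described in this post: block only archive.org_bot.
ROBOTS_TXT = "User-agent: archive.org_bot\nDisallow: /\n"


def polite_fetch(
    user_agent: str,
    path: str,
    fetch: Callable[[str], str],
    robots_txt: str = ROBOTS_TXT,
) -> Optional[str]:
    """Fetch a path only if robots.txt allows the declared user agent."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    if not rules.can_fetch(user_agent, path):
        return None  # a compliant crawler stops here, voluntarily
    return fetch(path)


def fake_fetch(path: str) -> str:
    """Stand-in for an HTTP request (hypothetical, no network access)."""
    return f"<html>contents of {path}</html>"


# A crawler that honestly identifies itself is turned away...
print(polite_fetch("archive.org_bot", "/article", fake_fetch))
# ...the same request under a different declared name goes through...
print(polite_fetch("HypotheticalBot", "/article", fake_fetch))
# ...and a crawler that never consults robots.txt is not affected at all.
print(fake_fetch("/article"))
```

In other words, the only crawler this rule reliably stops is the one that identifies itself honestly and chooses to comply, which is the archive's own.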

Personally, I stand on the side of the Internet Archive. While major publishers' content is unlikely to disappear in the short term, there is simply no adequate substitute for a single space where scattered materials can be accessed chronologically. I hope both sides reach a reasonable solution before this trend spreads to more publishers — and that the Internet Archive continues to exist as an open preservation space for everyone.

Further Reading

For additional perspectives on this issue, the following resources are recommended.

- Reddit, r/Archivists: Reactions and on-the-ground opinions from the archivist community.

- EFF (Electronic Frontier Foundation): Taking a similar stance to the Techdirt piece, the EFF argues that blocking the Internet Archive does not stop AI; it erases the web's historical record.